Holacracy: The New Management System for a Rapidly Changing World

We are not ready for AI: our organizations, our companies, our society. Thanks to AI, everyone is becoming more powerful. But at the same time, we are putting chains on ourselves: rules for everyone and everything.

AI is an empowerment technology: it empowers people to make smarter decisions and take better actions. But it’s not enough to give people powerful tools. They must also have the authority to exploit them. However, our organizational structures are designed for control, not for change.

Decisions are delegated upwards because employees prefer to “save their own skin” and supervisors see themselves as controllers rather than enablers. Decisions are delegated away from action and information. Worse still, decisions are delegated upwards until they reach someone who has no information at all (remember the Peter Principle?), because decision-making becomes more difficult when you have more information.

If we want AI to reach its full potential, we need an organization that empowers people. The book “Holacracy: The New Management System for a Rapidly Changing World” advocates an alternative to bureaucracy that relies on principles of cooperation rather than on rules of control.

Interestingly, author Brian J. Robertson published the book back in 2015, long before the current AI disruption, but it couldn’t be more timely or relevant. It introduces a holistic management system that is perfectly suited to AI-powered organizations:

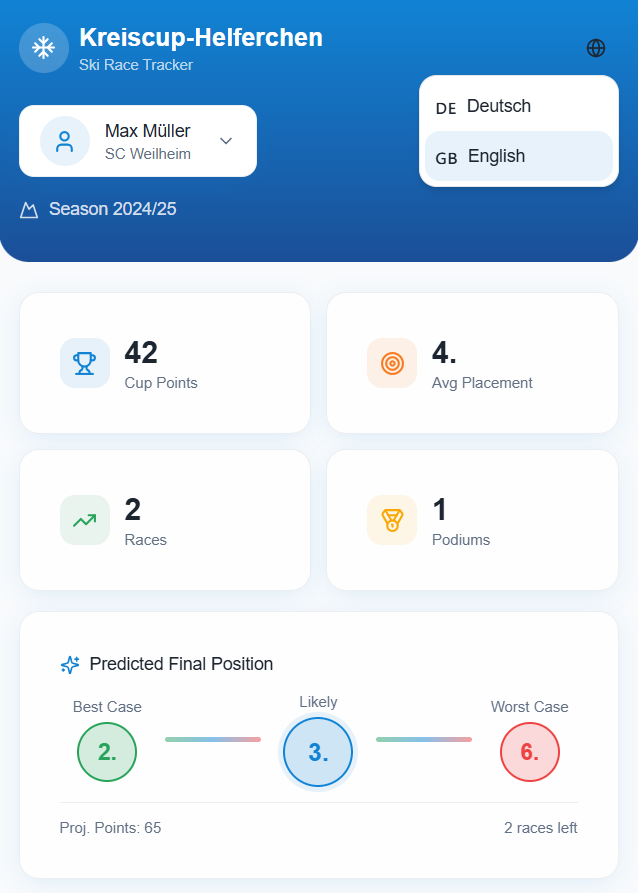

🎭 Roles, Not 👤 Persons: An organization is defined by the interaction of roles, not by individuals. A person can have multiple roles. And a role can be filled by an AI agent instead of a human being.

⭕ Circles, Not 🧱 Silos: Roles form so-called circles, i.e., teams that organize themselves dynamically, and act autonomously. In an AI Holacracy, a multi-agent system can form a circle.

⚛️ Holarchy, Not 📐 Hierarchy: Instead of a top-down chain of command, the circles form a dynamic network and combine into larger circles. This topology is known in agentic AI architectures as an “Agent Network”.

🕸️ (Distributed) Autonomy, Not 👑 (Central) Authority: Each circle decides and acts independently to achieve its objectives and results … which sounds like the definition of agentic AI.

✅ Consent, Not 🤝 Consensus: Don’t wait for everyone to say OK, just keep going until someone says stop. This is also the modus operandi of most AI agents: they proceed until the human-in-the-loop (HITL) says stop.

⚡ Tension, Not 🧊 Suspension: Differences of opinion within a circle and between circles are a good thing. The tension creates momentum. It is bad to freeze conflicts and suspend their resolution. Agentic AI design uses the Multi-Agent Debate (MAD) pattern to leverage this tension for better results.

📜 Constitution, Not 👔 CEO: The rules determine which roles can decide and do what. This also applies to the CEO. For AI agents, the rules are defined in the system prompt.
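To make the mapping a little more tangible, here is a minimal Python sketch of three of these ideas: roles, circles, and consent. It is only an illustration, not something taken from the book or from any agent framework. The names `Role`, `Circle`, `decide_by_consent`, and `compliance_check` are all hypothetical, and a role here could stand for either a person or an AI agent.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


def no_objection(proposal: str) -> Optional[str]:
    """Default behavior for a role: raise no objection."""
    return None


@dataclass
class Role:
    # Hypothetical model: a role is a name plus a function that may object.
    name: str
    # Returns a reason string to object, or None to let the proposal pass.
    objects_to: Callable[[str], Optional[str]] = no_objection


@dataclass
class Circle:
    # Hypothetical model: a circle is a named group of roles.
    name: str
    roles: List[Role] = field(default_factory=list)

    def decide_by_consent(self, proposal: str) -> List[str]:
        """Collect objections; an empty list means the proposal proceeds."""
        objections = []
        for role in self.roles:
            reason = role.objects_to(proposal)
            if reason is not None:
                objections.append(f"{role.name}: {reason}")
        return objections


def compliance_check(proposal: str) -> Optional[str]:
    # Assumed example rule: object only when customer data is involved.
    return "touches customer data" if "customer data" in proposal else None


circle = Circle("Support", [Role("Responder"), Role("Compliance", compliance_check)])
print(circle.decide_by_consent("Draft replies automatically"))
print(circle.decide_by_consent("Export customer data"))
```

The first proposal goes ahead because nobody objects. The second one is stopped by a single role. That is the difference between consent and consensus: no one has to say yes, someone only has to say stop.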

To unleash the power of AI, companies must first liberate themselves. Transformation means changing the organization itself. Anyone looking for an organizational model that is suited to the AI age will find Holacracy a perfect design fit. That is why it is one of the Best of Books for Business.
